Lesson Plan: AI's Environmental Impact

On applying green chemistry to daily life for high school & college students.

Hey folks, this week I have a resource for educators. Please share with the teachers in your life and feel free to read if you’re interested in what I’m doing in my classes!

Introduction

The environmental impact of large language models (LLMs), a prominent form of generative artificial intelligence (GenAI), has received a great deal of media attention and has prompted direct political action from citizens who are resisting the construction of new data centers. However, many people are still completely unaware of the environmental impact of GenAI or are unsure how to evaluate vague statements such as “every conversation with ChatGPT uses X gallons of water”. This issue is of direct relevance to younger generations, who make liberal use of tools like ChatGPT, Claude, Grok, and other GenAI chatbots for school, work, and their daily lives.

So, I’ve developed a short lesson on the environmental impact of AI chatbots for my Green Chemical Engineering class. The lesson can be completed in 20-30 minutes and involves an in-class activity followed by a short reflective homework assignment, with optional reading material for courses with strong reading components. This lesson can easily be applied to any high school or college-level science class that discusses the water cycle, environmental issues, or critical thinking.

Learning Goals

After this lesson, students will be able to…

- Understand & explain why using GenAI large language models consumes water and energy

- Compare the environmental impact of GenAI to other activities, such as red meat consumption, email, or video streaming

- Assess the accuracy of a claim by carrying out a web search

- Appreciate the complexity of environmental impact assessment

- Assess their personal use of GenAI tools in daily life

Teacher Background

Massive amounts of energy and water are used in both the creation and usage of generative AI models. Creating (“training”) a generative AI model involves lots of computational power (GPU usage), which requires not only energy to operate computers, but also cooling water to ensure these computers don’t overheat. Every upgrade to ChatGPT (e.g., GPT-3, GPT-4, GPT-4o, GPT-5) requires another round of this model training.

Once a model is created, it can then be used. While one can run a smaller, “distilled” version of a GenAI model on a small scale with minimal environmental impact (more on that later), every subsequent use of a model that is accessed via a web interface (ChatGPT, Claude, etc.) requires additional resources (namely energy, water, & computing power) to run. That’s because these chatbots are hosted on servers in various data centers, which need power to run and water to keep them cool. Accessing these tools online is how the vast majority of people use these chatbots, so this usage is the focus of the many reports that chatbots are using up environmental resources.

With regard to power, GenAI is already using ~2% of the world’s energy, according to a January 2024 report from the International Energy Agency (IEA). 2% may seem small, but that’s more than the energy usage of all fertilizer production (~1% of global energy demand), which is used to grow the majority of the planet’s food supply. Often, the financial cost of this energy usage is passed on to consumers, with electricity bills rising across the country due to the energy demand of these data centers. Additionally, many of these data centers are powered not by sustainable forms of energy but by fossil fuels; the Memphis, TN xAI plant that powers Grok, for example, uses gas turbines that are currently emitting plumes of “ozone-depleting nitrogen oxides, formaldehyde, sulfur dioxide, and other pollutants that can contribute to heart disease, cancer, and respiratory illnesses”. Many have argued that widespread GenAI use is increasing demand for fossil fuels at a time when we should be rapidly transitioning to sustainable energy. Unsurprisingly, communities of color and poor/working class communities have had to deal with the worst of these effects.

When it comes to water use, there are a few key points to remember:

- The water used for cooling must be clean water. This is because water that contains salt or other contaminants is less effective at heat transfer and can corrode or damage the pipes, pumps, etc. that move the water through the data centers. This means that data centers are inherently taking either drinking water or the water used for crops from local communities.

- “Used” generally means “evaporated” in this context. Water is being used as a heat exchange fluid to transfer energy from a hot computer/server to the water, keeping the server at a steady temperature while heating up the water, usually to the point of evaporation. These water vapor emissions themselves have little direct impact (water vapor is technically a greenhouse gas, but vapor released near the ground cycles back out of the atmosphere within days, and it is not an ozone depleter). However, displacing clean drinking water into the sky has an impact because it takes that water from people and other life forms that need it, and it disrupts the natural water cycle.

- Taking massive amounts of water from a single source can have other side effects, such as hurting aquatic populations or displacing sediment into local water supplies. For example, a Georgia woman who lives next to a Meta data center has been unable to use her own tap water ever since the proliferation of everyday AI tools.

- Generating images or videos using GenAI is orders of magnitude more resource intensive than generating text. One study suggests that generating a single 1024x1024 pixel image requires the equivalent of running a microwave for 5 seconds, while generating a 5-second video requires the equivalent of running a microwave for an hour. Worse, a September 2025 study found that the environmental impact doesn’t scale linearly: doubling the generated video’s length quadrupled the energy demand. (This is particularly relevant as Meta’s Vibes and OpenAI’s Sora 2 apps have now launched!)

- Note the difference between water withdrawal and water consumption. From OECD.AI: “Water withdrawal refers to freshwater taken from the ground or surface water sources, either temporarily or permanently, and then used for agricultural, industrial or municipal uses. On the other hand, water consumption is defined as ‘water withdrawal minus water discharge’, and means the amount of water ‘evaporated, transpired, incorporated into products or crops, or otherwise removed from the immediate water environment’.”
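If you want students to play with the numbers from the last two points, here is a minimal Python sketch. It uses only the rounded microwave equivalents quoted above and the OECD.AI definition of water consumption. The function names are my own, the idea that energy grows with the square of video length at every length is an assumption extrapolated from the "doubling quadruples" finding, and the withdrawal and discharge figures are made up for illustration.

```python
# Rounded microwave-time equivalents quoted in the text above.
IMAGE_MICROWAVE_S = 5            # one 1024x1024 image ~ 5 s of microwave use
VIDEO_5S_MICROWAVE_S = 60 * 60   # one 5-second video ~ 1 hour of microwave use


def video_microwave_seconds(length_s: float) -> float:
    """Estimate microwave-seconds for a generated video of a given length.

    ASSUMPTION: energy grows with the square of video length at every
    length, extrapolated from "doubling the length quadruples the energy".
    """
    return VIDEO_5S_MICROWAVE_S * (length_s / 5) ** 2


def water_consumption(withdrawal_l: float, discharge_l: float) -> float:
    """Water consumption = water withdrawal minus water discharge (liters)."""
    if discharge_l > withdrawal_l:
        raise ValueError("discharge cannot exceed withdrawal")
    return withdrawal_l - discharge_l


if __name__ == "__main__":
    # How many single images match one 5-second video, in microwave time?
    print(VIDEO_5S_MICROWAVE_S / IMAGE_MICROWAVE_S)

    for length in (5, 10, 20):
        hours = video_microwave_seconds(length) / 3600
        print(f"{length:>2} s video ~ {hours:.0f} h of microwave use")

    # Hypothetical facility: withdraws 1000 L and returns 200 L to the source.
    print(water_consumption(1000, 200))
```

Under these assumptions, a 10-second video costs four times as much as a 5-second one, and a 20-second video sixteen times as much, which makes the "doesn't scale linearly" point concrete for students.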

While all of this is concerning, there are a few important caveats to this analysis:

- AI usage is highly context-dependent, and its resource intensiveness can’t always be boiled down to a single heuristic/catchphrase like “every prompt uses X mL of water”. Every prompt uses a different number of tokens, and some prompts inherently use more resources than others (think about how a simple question with a sentence-long answer may use less energy than generating a 5-paragraph essay). Metrics such as “2 liters for 50 prompts” or “one 500mL bottle of water for every 100-word email” are accurate, but they represent averages, making them somewhat practical but not universal.

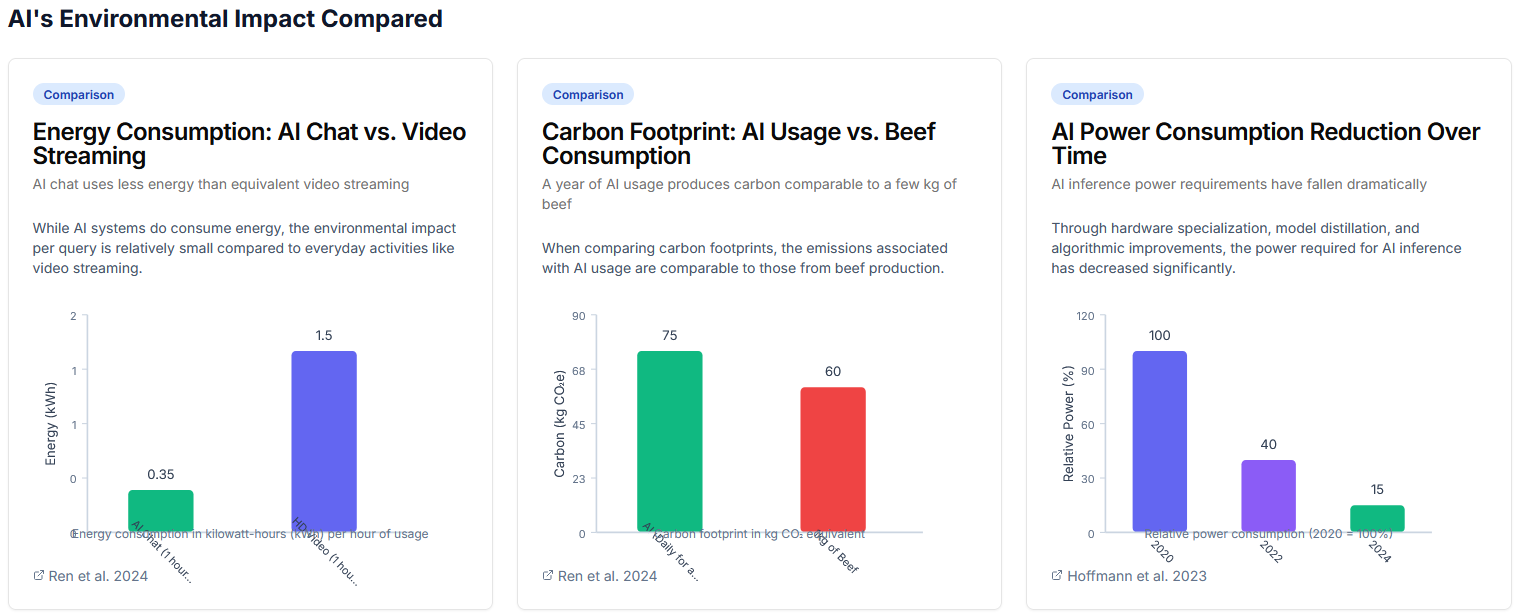

- All data center use requires this resource consumption, not just GenAI chatbots. For example, video streaming (YouTube, Netflix, etc.) requires much more energy than ChatGPT. Even old emails or photos sitting on Google Drive are taking up storage space on a server somewhere. (Also, other everyday activities such as beef consumption have a large environmental impact as well, though this is venturing into whataboutism and the broader question of whether an individual’s carbon footprint is relevant when industries are the biggest polluters.)

- The resource consumption of GenAI will go down with time, and in fact is already doing so. Initially, it was thought that models had to get bigger and bigger every year to keep up with technological progress, but we’re starting to find that smaller models can be just as effective. Additionally, data centers could eventually transition from fossil fuels to renewable energy, reducing the harmful energy impacts. (That said, we’re living in the here and now; even if GenAI’s impact is lower in 20 years, that doesn’t help the people choking in communities like Memphis today!)

- Casual, less necessary AI usage (e.g., asking ChatGPT what to have for dinner) should be totally separated from AI as a research tool (e.g., using AI to study drug development). In fact, using AI to study climate change, energy optimization, and resource efficiency can very much help the environment.

- You can run a small, distilled LLM like gemma3-1b on a Raspberry Pi or even your personal laptop, assuming it has enough RAM (a 1-billion-parameter model typically needs only a few GB). This will result in no data center cooling water usage and only very small electricity usage, though the AI will be a lot “dumber” than the full versions of ChatGPT and similar tools running from a data center. (This could be a cool activity for high school students!)
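To show students why the catchphrase metrics from the first caveat are averages rather than universal constants, here is a short Python sketch that converts the two quoted figures into per-unit amounts. The helper names are my own, and the idea that water use scales linearly with response length is a simplifying assumption for classroom discussion, not a measured relationship.

```python
def per_unit_ml(total_ml: float, units: int) -> float:
    """Average water per unit (prompt, word, ...) in milliliters."""
    return total_ml / units


def scaled_estimate_ml(response_words: int, ml_per_word: float) -> float:
    """Estimate water for a response of a given length.

    ASSUMPTION: water use scales linearly with the number of words
    generated. Real usage depends on the model, hardware, and data center.
    """
    return response_words * ml_per_word


# Headline figures quoted in the text above.
ml_per_prompt = per_unit_ml(2000, 50)  # "2 liters for 50 prompts"
ml_per_word = per_unit_ml(500, 100)    # "500 mL bottle per 100-word email"

if __name__ == "__main__":
    print(f"Average per prompt: {ml_per_prompt:.0f} mL")
    print(f"Average per word:   {ml_per_word:.0f} mL")

    # Hypothetical responses: a one-sentence answer vs. a 100-word email.
    for words in (15, 100):
        print(f"{words:>3} words ~ {scaled_estimate_ml(words, ml_per_word):.0f} mL")
```

Notice that the two headline figures disagree with each other: the first implies about 40 mL per prompt, while the second implies 500 mL for a single 100-word email. That gap is a nice discussion starter about how much an "average" depends on what was measured and how.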

With all of that said, it is still crucial for students to understand why their GenAI use has an environmental impact. That way, they can make an informed decision about how to use it in their daily lives. Learning about AI’s impact also teaches students critical thinking skills; going through the process of assessing claims like “every ChatGPT prompt uses a glass of water” teaches them about everything from how technology works to how catchy slogans can be more persuasive than raw facts.

I’ve done my best to explain how GenAI works here, but if you’re still totally lost, watch my video from last fall.